Harvesting Air Quality Data with a NodeMCU, SensorWeb and IFTTT

Posted: July 13, 2016 Filed under: iot, mozilla, Uncategorized | Tags: arduino, ifttt, iot, mozilla

Project SensorWeb is an experiment from the Connected Devices group at Mozilla in open publishing of environmental data. I am excited about this experiment because we’ve had some serious air quality discoveries in Portland recently – our air is possibly the worst in the USA, and bad enough that mega-activists like Erin Brockovich are getting involved.

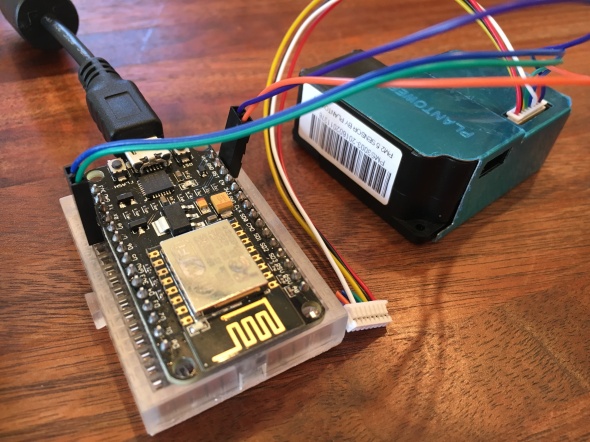

A couple of weeks ago, Eddie and Evan from Project SensorWeb helped me put together a NodeMCU board and a PM2.5 sensor so I could set up an air quality sensor in Portland to report to their network. They’re still setting up the project so I haven’t gotten the configuration info from them yet…

But you don’t need the SensorWeb server to get your sensor up and running and pushing data to your own server! I want a copy of the data for myself anyway, to be able to do my own visualizations and notifications. I can then forward the data on to SensorWeb.

So I started by flashing the current version of the SensorWeb code to the device: a NodeMCU 0.9 board with an onboard ESP8266 wifi chip and a PM2.5 sensor attached to it.

I used Kumar Rishav’s excellent step-by-step post to get through the process.

Some things I learned along the way:

- On Mac OS X you need a serial port driver in order for the Arduino IDE to detect the board.

- After much gnashing of teeth, I discovered that you can’t have the PM2.5 sensor plugged into the board when you flash it.

After getting the regular version flashed correctly, I tested with Kumar’s API key and device id, and confirmed it was reporting the data correctly to the SensorWeb server.

Now for the changes.

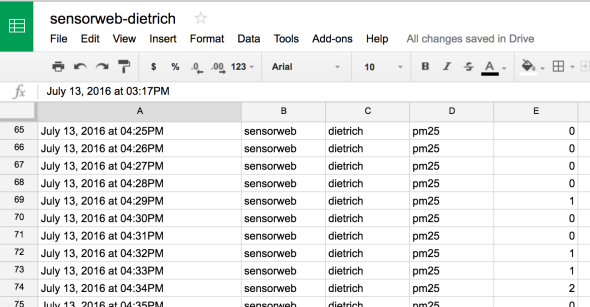

- I set up the Maker channel on IFTTT, which allows me to post data to an HTTP endpoint to get it into IFTTT’s system.

- I then created a new IFTTT recipe that accepts the data from the device and pushes it into a Google spreadsheet.

- I forked the SensorWeb code and modified it to post to the Maker channel instead of the SensorWeb server.
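For anyone who wants to test their Maker channel before touching the firmware, here is a rough sketch of the request the device needs to make. It is written in Python rather than the Arduino code that runs on the NodeMCU, and it is not taken from the SensorWeb fork. The event name, the key, and the choice of which reading goes in which field are all placeholders I made up for illustration; the Maker channel itself just takes a POST with up to three JSON fields named `value1`, `value2` and `value3`.

```python
import json
import urllib.request

# IFTTT Maker channel trigger endpoint. The event name and key are
# filled in per account; the ones used in the examples are placeholders.
MAKER_URL = "https://maker.ifttt.com/trigger/{event}/with/key/{key}"


def build_maker_request(event, key, device_id, pm25):
    """Build (but do not send) the POST request for one PM2.5 reading."""
    # Assumption: device id goes in value1 and the reading in value2.
    # The recipe decides which spreadsheet column each value lands in.
    payload = {"value1": device_id, "value2": pm25}
    return urllib.request.Request(
        MAKER_URL.format(event=event, key=key),
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def send_reading(event, key, device_id, pm25):
    """Send one reading to IFTTT and return the HTTP status code."""
    request = build_maker_request(event, key, device_id, pm25)
    with urllib.request.urlopen(request, timeout=10) as response:
        return response.status
```

Calling `send_reading("pm25_reading", "YOUR_MAKER_KEY", "nodemcu-01", 12.5)` with a real key should trigger the recipe, assuming an event with that name exists on your account.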

I flashed the device and voilà, it is publishing data to my spreadsheet.

And now once SensorWeb is ready to take new devices, I can set up a new IFTTT recipe to forward the posts to them, allowing me to own my own data and also publish to the project!

Bubble and Tweak – IoT at the Ends of the Earth

Posted: July 12, 2016 Filed under: iot, mozilla, Uncategorized | Tags: anstruther, iot, mozilla, scotland

Last month I spent a week working on IoT projects with a group of 40 researchers, designers and coders… in Anstruther, a small fishing village in Scotland. Not a high-tech hub, but that was the point. We immersed ourselves in a small community with limited connectivity and interesting weather (and fantastic F&C) in order to explore how they use technology and how ubiquitous physical computing might be woven into their lives.

The ideamonsters behind this event were Michelle Thorne and Jon Rogers, who are putting on a series of these exploratory events around the world this year as part of the Mozilla Foundation’s Open IoT Studio. The two previous editions of this event were a train caravan in India and a fablab sprint in Berlin (which I also attended, and will write up as well. I SWEAR.).

Michelle and Jon will be writing a proper summary of the week as a whole, so I’m going to focus on the project my group built: Bubble.

Context

From research conducted with local fishing folk, farmers from a bit inland, and a group of teenagers from the local school, we figured out a few things:

- Mobile connectivity is sparse and unreliable throughout the whole region. In this particular town, only about half the town had any service.

- The important information, places and things aren’t immediately obvious unless you know a local.

- Just like I was, growing up in a small town: The kids are just looking for something to do.

Initially we focused on the teens… fun things like virtual secret messaging at the red telephone boxes. Imagine you connect to the wifi at the phone box, and the captive portal is a web UI for leaving and receiving secret messages. Perhaps they’re only read once before dying, like a hyperlocal Snapchat. Perhaps the system is user-less, mediated only by secret combinations of emojis as keys. The street corner becomes the hangout, physically and digitally.
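To make the read-once idea concrete, here is a tiny sketch of how such a message box could behave. This is not something we built that week; it is a hypothetical in-memory version in Python, and the class name, method names and the choice to treat the emoji key as an ordered sequence are all my own assumptions.

```python
from typing import Dict, List, Optional, Tuple

EmojiKey = Tuple[str, ...]


class ReadOnceBoard:
    """User-less message box: messages are keyed by an emoji sequence
    and disappear as soon as they have been read once."""

    def __init__(self) -> None:
        self._messages: Dict[EmojiKey, List[str]] = {}

    def leave(self, emoji_key: EmojiKey, text: str) -> None:
        """Leave a message under the given emoji sequence."""
        self._messages.setdefault(emoji_key, []).append(text)

    def pick_up(self, emoji_key: EmojiKey) -> Optional[str]:
        """Return the oldest message for this key and delete it,
        or None if nothing is waiting."""
        queue = self._messages.get(emoji_key)
        if not queue:
            return None
        text = queue.pop(0)
        if not queue:
            # Nothing left under this key, so forget the key too.
            del self._messages[emoji_key]
        return text
```

There are no accounts anywhere in this: anyone standing at the phone box who knows the emoji sequence can pick up the message, and after that it is gone.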

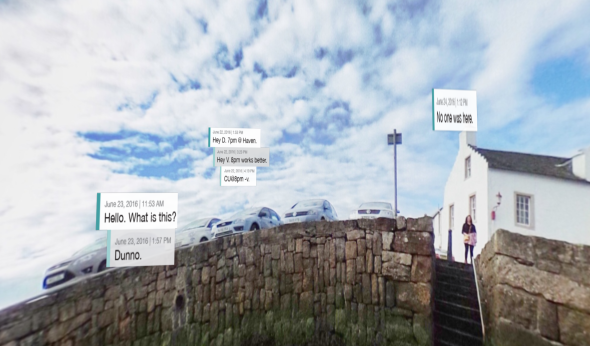

We meandered to public messaging from there, thinking about how there’s so much to learn and share about the physical space. What’s the story behind the messages to fairies that are being left in that phone box? I can see the island off the coast from here – what’s it called and what’s out there? Who the hell is Urquardt and what’s a “wynd”? Maybe we make a public message board as well – disconnected from the internet but connected to anything within view.

We kept going back to the physical space. We talked about a virtual graffiti wall, and then started exploring AR and ways of marking up the surroundings – the people, the history, the local pro-tip on which fish and chips shop is the best. But all of this available only to people in close physical proximity.

Implementation

Given the context and the constraints, as well as watching the direction some of the other groups were going in, we started designing a general approach to bringing digital interactivity to disconnected spaces.

The first cut is Bubble: A wi-fi access point with a captive portal that opens a web page that displays an augmented reality view of your immediate surroundings, with messages overlaid on what you’re seeing:

A few implementation notes:

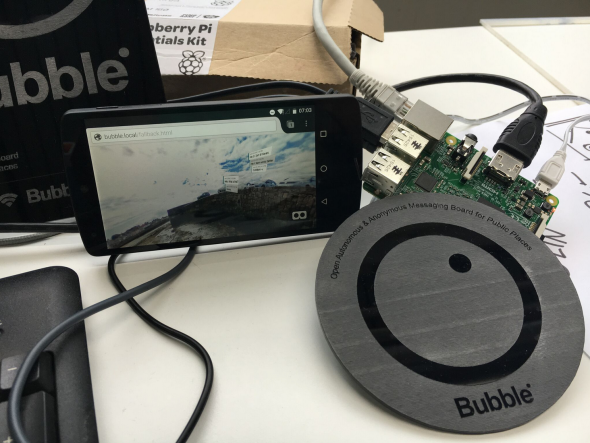

- We used a Raspberry Pi 3, running as a wi-fi access point.

- It ran a node.js script that served up the captive portal web UI.

- The web UI used getUserMedia to access the device camera, awe.js for the AR bits, and A-Frame for a VR backup view on iOS.

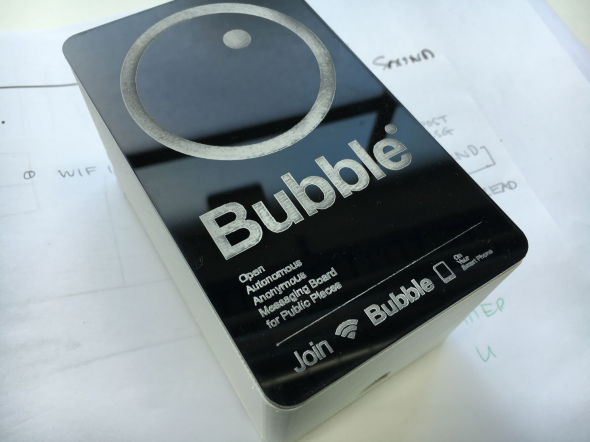

- We designed a logo and descriptive text and then lasercut some plaques to put up where hotspots are.
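For a feel of what the captive portal part involves, here is a minimal sketch of one common approach: the access point answers every web request itself and redirects anything not addressed to the portal over to the portal page. This is Python using only the standard library, not the node.js script we actually ran, and the hostname, port and page content are placeholders. It also assumes the Pi's DNS is already set up to point every hostname at the Pi, which is not shown here.

```python
from http.server import BaseHTTPRequestHandler, HTTPServer

PORTAL_HOST = "bubble.local"  # hypothetical hostname for the portal
PORTAL_URL = "http://" + PORTAL_HOST + "/"
PORTAL_PAGE = b"<!doctype html><title>Bubble</title><h1>Welcome to Bubble</h1>"


def route(host, path):
    """Decide the response for a request: (status, headers, body)."""
    hostname = host.split(":")[0].lower()
    if hostname != PORTAL_HOST:
        # Any other site (including OS connectivity checks) gets sent
        # to the portal, which is what makes the portal pop up.
        return 302, {"Location": PORTAL_URL}, b""
    if path == "/":
        return 200, {"Content-Type": "text/html; charset=utf-8"}, PORTAL_PAGE
    return 404, {"Content-Type": "text/plain; charset=utf-8"}, b"Not found"


class PortalHandler(BaseHTTPRequestHandler):
    def do_GET(self):
        status, headers, body = route(self.headers.get("Host", ""), self.path)
        self.send_response(status)
        for name, value in headers.items():
            self.send_header(name, value)
        self.send_header("Content-Length", str(len(body)))
        self.end_headers()
        self.wfile.write(body)


def serve(port=8080):
    """Run the portal until interrupted (port 80 usually needs root)."""
    HTTPServer(("0.0.0.0", port), PortalHandler).serve_forever()
```

Calling `serve()` starts the server. Keeping the routing in a plain function makes it easy to check the redirect behaviour without starting a server or joining the access point.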

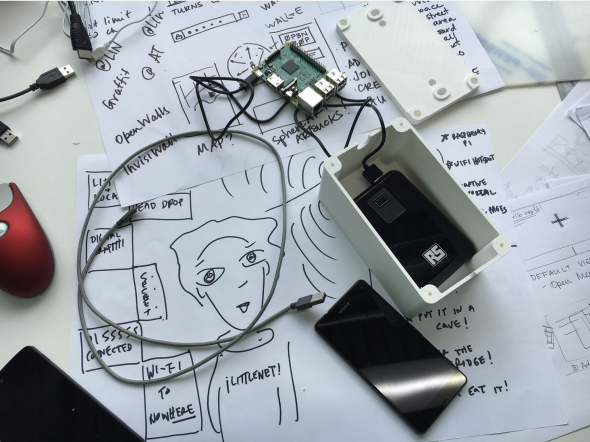

Designs, board, battery and boxes:

Challenges:

- Captive portals are hobbled web pages. You can’t do things like use getUserMedia to get access to the camera.

- iOS doesn’t have *any* way to let web pages access the camera.

- Power can be hard. We talked about solar and other ways of powering these.

- Gotta hope they don’t get nicked.

Bubble was an experimental prototype. There are no plans to work further on it at this time. If you’re interested, everything is on GitHub here. You can read more about the design here (PDF).

Thanks to fellow team members Julia Gratsova, Katie Caldwell, Vladan Joler. (Sadly, no Julia in the phonebox!)